Maxim Bonnaerens

Hi, I’m Maxim Bonnaerens. I am a researcher in deep learning with a focus on computer vision. On this site you can find ML-related flashcards, blog posts and publications. I currently work as a machine learning engineer at RoboJob. I obtained a PhD in resource-efficient machine learning from Ghent University in 2023.

Featured Publications

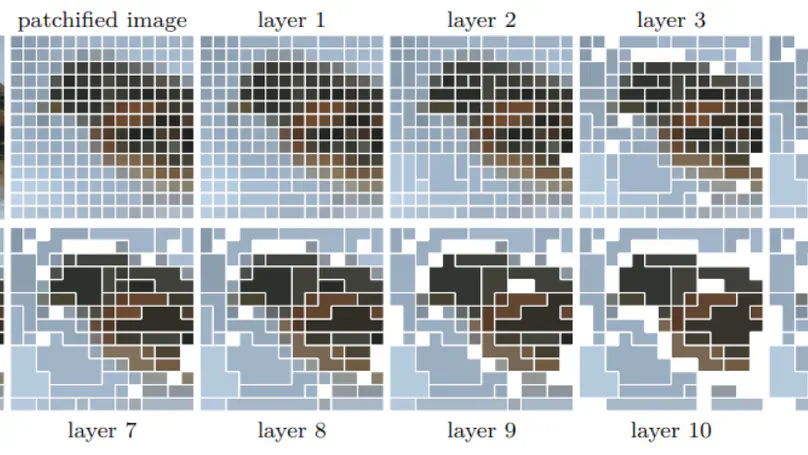

Learned Threshold Token Merging and Pruning for Vision Transformers

Vision transformers have demonstrated remarkable success in a wide range of computer vision tasks in recent years. However, their high computational cost remains a significant barrier to practical deployment. In particular, the complexity of self-attention in transformer models is quadratic in the number of input tokens. Therefore, techniques that reduce the number of input tokens that need to be processed have been proposed. This paper introduces Learned Thresholds token Merging and Pruning (LTMP), a novel approach that leverages the strengths of both token merging and token pruning. LTMP uses learned threshold masking modules that dynamically determine which tokens to merge and which to prune. We demonstrate our approach with extensive experiments on vision transformers on the ImageNet classification task. Our results show that LTMP achieves state-of-the-art accuracy across reduction rates while requiring only a single fine-tuning epoch, an order of magnitude faster than previous methods.
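The idea of threshold masking can be illustrated with a small, dependency-free Python sketch. It assumes that each token already has an importance score (for pruning) and a feature vector compared by cosine similarity (for merging), that merging follows a ToMe-style bipartite split, and that training uses a sigmoid relaxation of the hard threshold. These choices, and all function names (`soft_mask`, `prune`, `merge`), are illustrative assumptions rather than the LTMP implementation.

```python
import math
from typing import List

Vector = List[float]


def cosine(u: Vector, v: Vector) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def soft_mask(scores: List[float], threshold: float, temperature: float = 0.1) -> List[float]:
    # Assumed training-time relaxation: sigmoid((s - threshold) / temperature).
    # It is differentiable in `threshold`, so the threshold can be learned.
    return [1.0 / (1.0 + math.exp(-(s - threshold) / temperature)) for s in scores]


def prune(tokens: List[Vector], importance: List[float], threshold: float) -> List[Vector]:
    # Inference-time hard mask: drop tokens whose importance is not above the threshold.
    return [t for t, s in zip(tokens, importance) if s > threshold]


def merge(tokens: List[Vector], threshold: float) -> List[Vector]:
    # ToMe-style bipartite split (an assumption here): even-indexed tokens (set A)
    # may merge into odd-indexed tokens (set B). A pair merges only if its
    # similarity exceeds the threshold, so the number of merges adapts to the input.
    a_set, b_set = tokens[0::2], tokens[1::2]
    sums = [list(b) for b in b_set]
    counts = [1] * len(b_set)
    kept_a = []
    for a in a_set:
        sims = [cosine(a, b) for b in b_set]
        j = max(range(len(sims)), key=sims.__getitem__) if sims else -1
        if j >= 0 and sims[j] > threshold:
            # Fold `a` into its most similar partner; averaged below.
            sums[j] = [x + y for x, y in zip(sums[j], a)]
            counts[j] += 1
        else:
            kept_a.append(a)
    merged_b = [[x / c for x in s] for s, c in zip(sums, counts)]
    return kept_a + merged_b
```

In this sketch a higher merge threshold merges fewer tokens, while a higher pruning threshold removes more of them. During fine-tuning, the soft mask would stand in for the hard comparisons so that the thresholds receive gradients.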

Anchor pruning for object detection

This paper proposes anchor pruning for object detection in one-stage anchor-based detectors. While pruning techniques are widely used to reduce the computational cost of convolutional neural networks, they tend to focus on optimizing the backbone network, where most of the computation typically lies. In this work, we demonstrate an additional pruning technique specific to object detection: anchor pruning. With more efficient backbone networks and a growing trend of deploying object detectors on embedded systems, where post-processing steps such as non-maximum suppression can be a bottleneck, the impact of the anchors used in the detection head is becoming increasingly important. We show that many anchors in the object detection head can be removed without any loss in accuracy, and that with additional retraining, anchor pruning can even improve accuracy. Extensive experiments on SSD and MS COCO show that the detection head can be made up to 44% more efficient while simultaneously increasing accuracy. Further experiments on RetinaNet and PASCAL VOC show the general effectiveness of our approach. We also introduce ‘overanchorized’ models that can be used together with anchor pruning to eliminate hyperparameters related to the initial shape of anchors. Code and models are available at https://github.com/Mxbonn/anchor_pruning.
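To make the effect on the detection head concrete, here is a small Python sketch that counts anchors and the multiply-accumulates of the 3x3 prediction convolutions in an SSD-style head, before and after removing some anchor shapes. The feature map sizes, channel widths and anchors per location follow the commonly used SSD300/VGG16 layout (8732 default boxes) and are assumptions here; the anchor names and the choice of which anchors to drop are hypothetical and do not reproduce the paper's anchor selection procedure or its results.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple


@dataclass
class HeadLevel:
    fmap_size: int       # feature map is fmap_size x fmap_size
    in_channels: int     # channels entering the 3x3 prediction conv
    anchors: List[str]   # anchor shapes per location (hypothetical names)


# Assumed SSD300-style configuration: 4 or 6 anchor shapes per location.
FOUR = ["1:1", "1:1'", "2:1", "1:2"]
SIX = FOUR + ["3:1", "1:3"]
SSD300_LEVELS = [
    HeadLevel(38, 512, FOUR),
    HeadLevel(19, 1024, SIX),
    HeadLevel(10, 512, SIX),
    HeadLevel(5, 256, SIX),
    HeadLevel(3, 256, FOUR),
    HeadLevel(1, 256, FOUR),
]


def head_cost(levels: List[HeadLevel], num_classes: int) -> Tuple[int, int]:
    """Return (total anchors, MACs of the 3x3 class + box prediction convs)."""
    total_anchors, macs = 0, 0
    for lvl in levels:
        a = len(lvl.anchors)
        cells = lvl.fmap_size ** 2
        total_anchors += cells * a
        # Each anchor needs num_classes scores plus 4 box offsets per location.
        macs += cells * 3 * 3 * lvl.in_channels * a * (num_classes + 4)
    return total_anchors, macs


def prune_anchors(levels: List[HeadLevel], keep: List[Set[str]]) -> List[HeadLevel]:
    """Keep only the anchor shapes listed in keep[i] at level i."""
    return [
        HeadLevel(l.fmap_size, l.in_channels, [s for s in l.anchors if s in k])
        for l, k in zip(levels, keep)
    ]
```

For example, keeping only the four shapes in `FOUR` at every level reduces the head from 8732 to 7760 anchors, which also shrinks the set of candidate boxes passed to non-maximum suppression. Because head cost here is linear in the number of anchors per level, every removed anchor shape saves a fixed share of that level's prediction convolution.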